To run effective A/B tests, start by pinpointing a specific friction point and forming a data-backed hypothesis. Then test two variations of a page at the same time to see which performs better. Tools like Mouseflow can help you uncover exactly where users hesitate or drop off.

From there, build your variant, launch the experiment, and let it run until the results are statistically reliable.

Whether you’re in marketing, CRO, or UX, A/B testing replaces opinions with evidence and shows you what actually drives results. Let’s break down the exact steps to turn website guesswork into measurable growth.

“Now, I don’t run any tests unless they’re backed by some form of data. That was a huge mindset shift for me – from spaghetti testing to strategic testing by means of corroboration of data sources into strong hypotheses.”

What you need before your first test

You can’t just flip a switch and hope for the best. Set yourself up for testing success with these essentials:

- A quantitative testing tool: Use platforms like Varify or Optimizely to handle the actual traffic split.

- A qualitative analytics platform: You need to understand the “why” behind the “what.” Behavior analytics reveal real user struggles.

- Adequate website traffic: Reaching statistical significance takes a meaningful volume of visitors. If your page only gets 10 visitors a day, focus on traffic acquisition first.

6 steps to running A/B tests

Now that you’ve got the basics in place, let’s get into it. Here’s a simple 6-step process to run A/B tests that actually move the needle.

Step 1: Start with a clear goal

Before you test anything, define what success looks like. That could be increasing demo requests, improving checkout completion, or getting more users to click a key call-to-action.

Without a clear goal, even a “winning” test doesn’t mean much. You need a single metric that tells you whether your variation actually improved performance.

Step 2: Find the friction with behavior analytics

The biggest mistake in A/B testing is testing random ideas. The best tests start with real friction.

This is where behavior data becomes essential. Instead of guessing, you look for where users drop off, hesitate, or get frustrated.

For example, you can use Mouseflow’s Conversion Funnels to see exactly where users abandon a signup or checkout flow. If a large percentage drops off at one step, that’s a strong signal something isn’t working.
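To make the drop-off idea concrete, here is a minimal Python sketch that computes the share of visitors lost between each pair of funnel steps and flags the biggest leak. The step names and visitor counts are hypothetical, and this is not Mouseflow’s API; it assumes you have already exported the step counts from your funnel report.

```python
# Hypothetical visitor counts for each step of a signup funnel, in order.
# These numbers are made up for illustration only.
funnel = [
    ("Landing page", 10_000),
    ("Pricing page", 4_200),
    ("Signup form", 1_900),
    ("Account created", 600),
]


def step_drop_off(steps):
    """Return (from_step, to_step, drop_off_rate) for each consecutive pair of steps."""
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(steps, steps[1:]):
        rate = 1 - count_b / count_a  # share of visitors lost between the two steps
        results.append((name_a, name_b, rate))
    return results


drops = step_drop_off(funnel)
for name_a, name_b, rate in drops:
    print(f"{name_a} -> {name_b}: {rate:.0%} drop-off")

# The step with the highest drop-off rate is the first candidate to investigate.
worst = max(drops, key=lambda d: d[2])
print(f"Biggest leak: {worst[0]} -> {worst[1]}")
```

With these made-up numbers, the signup form loses the largest share of its visitors, so that is where you would dig into recordings and friction signals next.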

From there, dig deeper. Friction Score highlights issues like rage clicks, dead clicks, and cursor thrashing. These are clear signs that users are confused or blocked. If you notice users repeatedly clicking a non-clickable element or struggling with a specific form field, you’ve likely found a high-impact testing opportunity.

If you’re not sure what to test yet, you can explore some examples of elements you can A/B test here.

At this stage, you’re not changing anything yet. You’re simply identifying where the problem is.

Step 3: Turn insights into a testable hypothesis

Once you’ve identified a friction point, the next step is to turn it into a clear hypothesis.

A good hypothesis connects a change to an expected outcome and explains why it should work. For example, if users are abandoning a form halfway through, your hypothesis might be that reducing the number of fields will increase completion rates because it lowers effort.

This step is what separates structured testing from guesswork. It forces you to be intentional about what you’re testing and what you expect to learn.

A well-formed hypothesis usually follows this format:

We hypothesize that if we do A (change the CTA copy on a landing page), we will get B (an 8% increase in signup conversions), because of C (the data-backed reason the change should work).

Step 4: Create your page variation

Now it’s time to build your challenger page. This is the variant that will go head-to-head against your existing website design (the control).

The key here is focus. If you change too many things at once, you won’t know what actually made the difference. In most cases, it’s better to test one meaningful change at a time, especially when you’re trying to learn what drives behavior.

That said, if you’re testing a larger concept like a full page redesign, grouping changes can make sense. The important part is knowing what kind of test you’re running: learning-focused or outcome-focused.

Step 5: Run the test and monitor behavior

Launch your test and split your traffic evenly between the control and the variation. From there, the goal is simple: let real users interact with both versions and collect enough data to make a reliable decision.
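Your testing tool normally handles the split for you, but the idea behind a stable 50/50 split can be sketched in a few lines. This minimal Python example assumes a hashing approach (a common technique, not necessarily how Varify or Optimizely work internally): hashing the experiment name together with a user ID keeps each returning visitor in the same group. The `assign_variant` function and the `signup-cta` experiment name are hypothetical.

```python
import hashlib
from collections import Counter


def assign_variant(user_id: str, experiment: str = "signup-cta") -> str:
    """Deterministically assign a user to 'control' or 'variant' with a 50/50 split."""
    # Hashing experiment + user ID means the same user always sees the same version,
    # while different experiments split users independently of each other.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest[:8], 16) % 100  # map the hash to a bucket from 0 to 99
    return "control" if bucket < 50 else "variant"


# Simulate 10,000 visitors to check that the split is close to even.
counts = Counter(assign_variant(f"user-{i}") for i in range(10_000))
print(counts)
```

The simulated counts should land close to, but not exactly at, 5,000 each. Keying on the experiment name as well as the user means a visitor’s group in one test doesn’t decide their group in the next.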

Ending a test too early is one of the most common mistakes. Early results can be misleading, so you need to wait until you have enough data to reach statistical confidence.
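How much data counts as “enough” depends on your baseline conversion rate and the size of the lift you want to detect. The sketch below uses the standard normal-approximation formula for comparing two proportions, assuming a two-sided test at 95% confidence and 80% power. The 5% baseline rate and the 8% relative lift (echoing the hypothesis example in Step 3) are illustrative assumptions, and `sample_size_per_variant` is a hypothetical helper.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Approximate visitors needed in EACH arm of a two-sided two-proportion test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # conversion rate you hope the variant reaches
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for 95% confidence
    z_beta = NormalDist().inv_cdf(power)  # about 0.84 for 80% power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)  # round up: you can't have a fraction of a visitor


print(sample_size_per_variant(0.05, 0.08))  # small lift: 8% relative
print(sample_size_per_variant(0.05, 0.20))  # bold lift: 20% relative
```

With these inputs, detecting an 8% relative lift takes roughly 48,000 visitors per variant, while a 20% lift needs only about 8,200. That gap is why small tweaks on low-traffic pages rarely reach significance, and why stopping early leaves you with too little data to trust.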

While the test is running, it can also be useful to observe how users behave on each version. For instance, by filtering session recordings by variant, you can see whether users interact with the new design the way you expected or whether new friction points appear.

Step 6: Analyze, implement and iterate

Once your test reaches statistical significance (typically at a 95% confidence level), it’s time to call it. Did your variant beat the control page?

If yes, push the winning design live to 100% of your traffic. If your variant lost, congratulations, you just saved your company from implementing a bad idea. Document the learnings, form a new hypothesis, and test again.
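To check whether a result clears the 95% bar, one common approach is a two-proportion z-test. The minimal sketch below assumes a fixed-horizon test (you evaluate once, at the planned sample size); many testing tools use other statistical methods, such as Bayesian or sequential approaches, so their reported numbers may differ. The conversion counts are made up.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test comparing the conversion rates of control (A) and variant (B)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both versions convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided p-value
    return z, p_value


z, p = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=590, n_b=10_000)
significant = p < 0.05  # p below 0.05 corresponds to the 95% confidence threshold
print(f"z = {z:.2f}, p = {p:.4f}, significant: {significant}")
```

Here the variant converts at 5.9% versus 5.0% for the control, and the p-value falls below 0.05, so the difference would count as significant at 95% confidence.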

But don’t stop when you find a winner. A higher conversion rate is useful, but understanding why it improved is what makes A/B testing valuable long term.

Did users find the page clearer? Did they complete tasks faster? Did the change remove a point of confusion?

The deeper insight is what you can apply to future tests across your site.

Example of a simple A/B test with real impact

Sometimes the highest-impact tests aren’t all that complex.

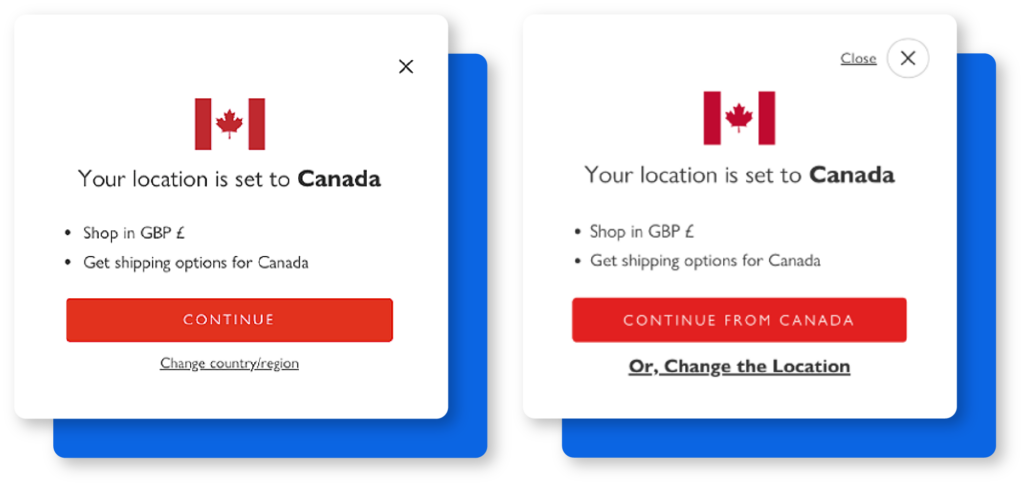

In a test run by Derek Rose, the team experimented with a location pop-up. The original version used a generic “Continue” CTA, while the variation made the action more explicit by changing it to “Continue from Canada” and clarifying the alternative option.

Derek Rose’s analysis of user behavior led to changing how the location pop-up worked

The change seems small, but it removes ambiguity. Users immediately understand what will happen when they click, and what their alternative is.

This resulted in a 37% increase in conversions.

This is a good reminder that effective A/B testing isn’t about dramatic redesigns, but about reducing friction and making decisions easier for users.

Common A/B testing mistakes to avoid

Even seasoned CRO specialists trip up occasionally. Watch out for these common pitfalls to keep your data clean:

- Stopping tests too early: Don’t call a winner after three days just because the graph looks nice. Wait for true statistical significance.

- Testing micro-changes on low traffic: If your site doesn’t get thousands of views, a slightly lighter shade of blue won’t move the needle. Test bold, structural changes instead.

- Ignoring the “why”: Knowing a variant won is great. Knowing why it won helps you apply that learning across your entire website.

FAQs

What is the first step in running an A/B test?

The first step is always identifying a problem area on your website. Use behavior analytics tools to spot where users drop off, then form a targeted hypothesis on how to fix it before building any variants.

How long should you run an A/B test?

Aim to run your test for two to four weeks. This captures behavior across every day of the week and gives your test a better chance of reaching a reliable 95% statistical significance.
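To turn a required sample size into a calendar estimate, divide the total visitors you need by the daily traffic entering the test and round up to whole weeks. This minimal sketch assumes traffic is split evenly across variants; the `weeks_to_run` function and its `min_weeks` floor of two weeks are hypothetical, chosen to match the guidance above.

```python
import math


def weeks_to_run(visitors_needed_per_variant, daily_visitors, variants=2, min_weeks=2):
    """Estimate test length in whole weeks from the traffic entering the test."""
    total_needed = visitors_needed_per_variant * variants
    days = math.ceil(total_needed / daily_visitors)
    # Round up to full weeks so every day of the week is represented equally.
    weeks = math.ceil(days / 7)
    return max(weeks, min_weeks)  # never shorter than the assumed minimum


print(weeks_to_run(8_000, daily_visitors=1_000))
print(weeks_to_run(8_000, daily_visitors=10))
```

Needing 8,000 visitors per variant takes about three weeks at 1,000 daily visitors, but more than four years at 10 a day, which is why low-traffic sites should focus on acquisition or bolder changes first.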

Can you run A/B tests without behavior analytics?

You can, but you get better results when you pair your testing tool with a behavior analytics platform such as Mouseflow. While A/B testing tools show you which version won, behavior analytics platforms help explain why users preferred it by capturing their actual interactions.

How much traffic do you need for A/B testing?

You generally need thousands of visitors per month to reach statistical significance in a reasonable amount of time. If your traffic is lower, focus on testing bold, high-impact changes rather than minor design tweaks.